When DDD clicked

DDD made sense to me much later than I'd like to admit - and only once it stopped being a bunch of words and started solving a real domain problem.

Merge the PR. Close the session. Done. ✅

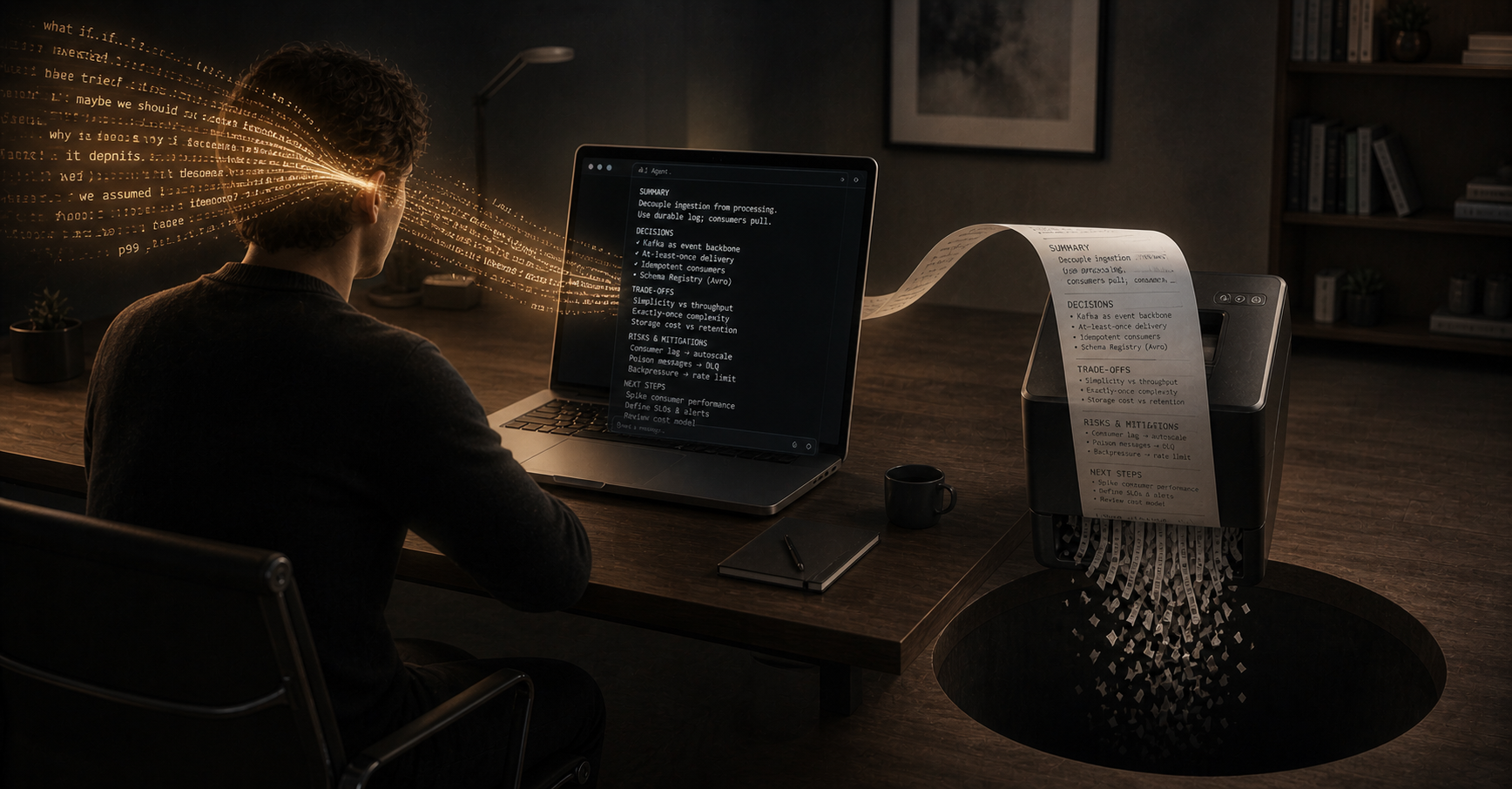

And just like that, some of the best technical thinking you’ve done all week is gone.

It’s becoming a pattern. Every day, we’re having the best technical conversations of our careers - we explore more approaches, ask more “stupid questions”, weigh more trade-offs and catch more edge cases before they ship.

AI makes that possible. It’s an always-on, never-tiring rubber duck that - unlike your colleagues - will not sigh or roll eyes at your questions or ideas. Quite the opposite, it’ll happily entertain everything.

And then? We merge the PR, close the session, and delete it.

Up until very recently, if you wanted to record a decision you made, it meant stopping, context-switching, writing things down manually, and hoping someone would care enough to read it later.

The result? Most teams didn’t bother with it, and to be honest - that was fair. I certainly didn’t bother. Because writing the code used to be the hard part. Capturing the reasoning felt like unnecessary overhead.

That trade-off made sense then.

It doesn’t anymore.

AI has collapsed the cost of implementation. What used to take hours takes minutes.

As a result, we’re shipping faster than ever - more PRs, more changes, more quick fixes, more hacks, more iterations.

But we didn’t scale the one thing that makes fast systems safe to change.

Decision context.

Most systems have the same shape:

That gap used to be manageable when change was slower. Now it compounds - because change is no longer slow.

When you ask “can we remove this?” or “why does this exist?” or “is this safe to change?” - AI looks at your patterns, your naming, your structure and it builds a story.

Sometimes it’s right, but, often, it’s very confidently wrong.

A guess dressed up as an explanation reads EXACTLY like an answer. And without recorded intent, that’s mostly what you’re going to get.

And this isn’t just a “future humans” problem - it’s a future-AI problem too. The thing you’ll use tomorrow to help you change or reason about your code, only has the code to work with. It starts the session as a new joiner in your team - remember how tough that was? If the reasoning was never written down, AI ends up doing what humans do in the same situation: it guesses.

We’ve got the world’s most patient collaborator, and we keep handing it half the picture.

A couple of patterns I see crop up:

Surface-level correctness. The code looks right. The build is green. The PR gets approved. But it doesn’t quite respect a deeper invariant of the system - one nobody wrote down because everyone “just knew” it. That invariant comes back as a bug under real conditions, days or weeks later.

Hidden coupling. A change that seems isolated ends up affecting something else entirely. A downstream service behaves slightly differently. A shared assumption gets broken - one that was talked through in a chat window once and never made it anywhere durable.

These aren’t really AI’s fault - they’re what happens when the system grows faster than its written record. Which it now does, by default.

We’re already doing the thinking!

Working with AI isn’t just code generation - we’re beyond that now. We often explore multiple approaches, analyse pros and cons, correct false assumptions and challenge suggestions. We go back and forth until we land on something we can actually get behind and defend in code reviews.

Something like this:

You thinking about adding a cache for the price lookup - p99 is 2.3s and we're missing SLO. options? AI Two obvious paths: • Redis - shared, scales horizontally, new dependency • In-memory - simple, per-instance, drifts between pods You redis means a new dep for one read path... worth it? AI Probably not yet. Single read path, low blast radius if in-memory goes wrong. What's your tolerance for stale prices? You depends - how do consumers actually read this? AI Checkout reads prices once per cart-build, not per render. Staleness only affects new carts, not active sessions. You ok then <1 min is plenty. need to confirm with product. what if the catalogue grows? AI At 10k SKUs you're fine in memory. Beyond that, revisit. Same when you scale past a single instance.

In many cases, it’s the most informed technical thinking we’ve ever done.

And then we merge the PR. And none of it survives.

If someone picks up where you left off, they might very well get the same suggestion you already rejected - for reasons you can no longer remember. Which is a great way to make the same mistake twice, faster than before.

We were aggressively changing our system to support a specific client.

Every day brought new urgent requests, and our responses were faster than ever, thanks to AI. And my, was it fast. Very fast. Sometimes the first quick fix was so quick, it led to unconsidered scenarios that needed more quick fixes - and this can quickly snowball.

And then days would go by before cracks began to show. When we tried to understand why, we hit a bit of a wall. We had made decisions in the past - good ones (probably). We just couldn’t tell you what they were, or why we made them.

Our logs had fallen out of the retention period. Code had been released, reverted, released again. Behaviour had changed multiple times in ways nobody could fully grasp or reconstruct.

The problem wasn’t bugs in our code.

It was that nobody knew why anything existed. Not the current state, nor the trade-offs we’d made under pressure. Just fragments - and a system that had evolved faster than our understanding.

The reality is, it’s impossible to keep up with the speed of change now. So the answer isn’t to slow down - it’s to change what we capture.

One question, asked before you close the conversation. That’s it. I’m aware this sounds almost insultingly simple.

“Summarise why we chose this approach, what we ruled out, and what assumptions we made.”

You Summarise why we chose this approach, what we ruled out, and what assumptions we made. AI We went with a 60-second in-memory cache for the price lookup, keyed by product ID. Established: • Checkout reads prices once per cart-build, not per render - so 60s staleness only affects new carts, not active sessions Ruled out: • Redis - introduces a new dependency for one read path • No cache - p99 latency was 2.3s, missed the SLO Assumed: • 60s of stale pricing is acceptable to product (confirmed - see PROD-4421) • Catalogue stays under 10k SKUs in memory • Single-instance cache is fine for now (revisit at 5+ pods)

Get the output, and log it.

Paste it into the PR description. Drop it in the ticket. Just, save it somewhere it will outlast the chat window.

That’s it. The reasoning already exists - you just did it. The cost of capturing it is now effectively zero.

The barrier isn’t effort anymore. It’s intent.

Intent is harder than it sounds. There’s no muscle memory for “before I close this, what did I just learn?”. You’ll forget. You’ll close the tab anyway. That’s fine - it’s a habit, not a process change. You don’t need a new tool, a meeting, or a Confluence space. Just one more question, before you close the tab.

I said you don’t need a new tool to start doing this - and you don’t. But if you want it to stick on a team, you can make the artefacts do some of the work for you.

A simple combination:

A PR template with the right sections. Add .github/pull_request_template.md to the repo with headers like What changed, Why, Decisions made, Alternatives considered, Assumptions. Once those sections exist in every PR, filling them in becomes routine.

A Claude command that does the writing. Drop a slash command into .claude/commands/pr-summary.md that takes the current chat and outputs each section in the format your template expects. Now the question - “summarise why we chose this…” - is one keystroke at the end of a session.

One honest caveat: this works best for changes that fit in a single session. If your work spans days or many conversations, you’ll need to capture as you go rather than only at the end. But for the bulk of day-to-day work, the single-session pattern covers a lot of ground.

But where? My (opinionated) view:

Now here’s where it gets interesting. Once the context lives somewhere durable, it becomes available to AI:

If it’s in your PR descriptions, a tool like the gh CLI can scan back through your decision history before making a change.

If it’s in your tickets, an MCP server connected to Jira (for example) can surface relevant decisions at the start of a conversation.

If it’s in your codebase as ADRs, you can point your LLM directly at it - and any future contributor reading those files gets the same context the AI does.

Take it a little further and you’ve got the foundation of something genuinely powerful - a vector store built from your team’s reasoning, queryable before every significant prompt. A RAG pipeline where the context isn’t documentation someone was supposed to write - it’s all of the thinking your team already did.

The format matters less than the habit. Store it anywhere - you can always change the format later. The important thing is that it outlives the session.

If you’ve already adopted a spec-driven approach, you might be reading this thinking - aren’t tools like OpenSpec, Spec Kit already solving this?

I’ve written about some of those tools already - and honestly, quite a bit of what this post is asking for, they do well. A good OpenSpec proposal captures rationale, alternatives considered, and trade-offs accepted. I use it, I like it, and if you’ve adopted one of these seriously you’re already most of the way there.

But there’s still a gap. Even in projects where we decided to use OpenSpec, things fall through.

These tools work for changes that go through a formal process. The gap is everything else. The quick fix. The small refactor. The mid-implementation “actually, wait, what about…” that turns out to matter three weeks later. Most decisions never get a spec written for them. They happen in a chat window, and that’s where they end.

So I see them sitting alongside each other - the specs & changes for heavyweight decisions, and the breadcrumb trail for the lightweight ones.

Not bugs, bad code or failing tests.

Systems where nobody knows why something exists, where AI can’t reliably reconstruct intent, and where every change builds on top of decisions nobody remembers.

I’ve written before about how AI can’t be accountable for what it produces - a human still owns the outcome. But you can’t really own a decision you that you can’t reconstruct. Decision context is part of what makes accountability possible in the first place.

That’s how regressions creep in and you end up with a codebase that moves fast - but can’t be safely understood.

There’s not much worse than a codebase that people are scared to change!

Writing code is no longer the hard part. Understanding it is.

We spent years complaining that documentation was too hard - well, now, it’s easy.

We should be treating decision context as something worth keeping. It’s not overhead, more like infrastructure - we’re going to keep building systems that evolve faster than anyone can explain them, and leaving ourselves breadcrumbs to hint at why we got there is extremely valuable.

For humans, and for the AI we’re relying on to help us.